Amazon's $25 Billion Anthropic Deal Is Really A Customer Contract

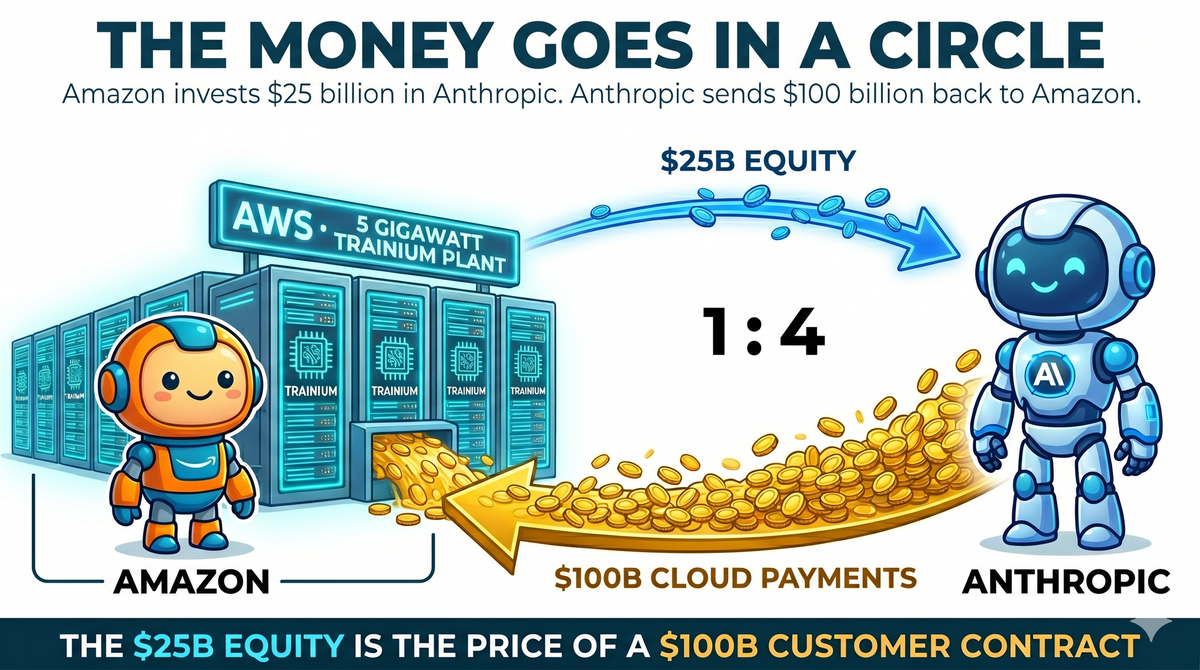

Amazon committed up to $25 billion to Anthropic this week ($5 billion funded immediately, $20 billion tied to milestones). In the same deal, Anthropic committed to spend more than $100 billion on Amazon's cloud over the next 10 years. Most of the coverage is calling this an investment. I think it is more useful to read the $25 billion as the price Amazon paid to secure a $100 billion customer contract.
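A quick back-of-the-envelope sketch of that framing, using only the figures quoted above. The variable names are mine, the $100 billion is treated as a floor because the commitment is "more than" that amount, and the even annual spread is an assumption for illustration, not a term of the deal.

```python
# Figures as stated in the article, in billions of US dollars.
equity_total = 25.0            # Amazon's total commitment
equity_upfront = 5.0           # funded immediately
equity_milestone = 20.0        # tied to milestones
cloud_commitment_floor = 100.0 # "more than $100 billion", so treated as a lower bound
contract_years = 10

# Committed cloud spend per dollar of equity, at minimum.
spend_per_equity_dollar = cloud_commitment_floor / equity_total
spend_per_upfront_dollar = cloud_commitment_floor / equity_upfront

# Assumption: spend is spread evenly across the term (the article does not say this).
average_annual_spend = cloud_commitment_floor / contract_years

print(f"Cloud spend per equity dollar (total):   at least {spend_per_equity_dollar:.1f}x")
print(f"Cloud spend per equity dollar (upfront): at least {spend_per_upfront_dollar:.1f}x")
print(f"Average annual cloud spend if spread evenly: at least ${average_annual_spend:.1f}B")
```

On these numbers, every dollar of equity corresponds to at least four dollars of committed cloud spend, which is the sense in which the equity reads as the price of the contract.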

What Amazon actually gets

Three things matter, in rough order of scale.

The first is the $100 billion in cloud revenue over 10 years, which puts Anthropic on track to become one of AWS's largest single customers.

The second is Anthropic's commitment to run its AI at scale on Amazon's custom AI chips (called Trainium). Most frontier AI labs train on Nvidia's chips. Google is the exception, using its own TPU chips. Until this week, no lab had been willing to bet its roadmap on a non-Nvidia chip built by another company. Anthropic just did. Its engineers are now writing low-level code for Amazon's chip architecture and talking to Amazon's chip team almost every day, and Amazon's Indiana training cluster, anchored by Anthropic, is already running over a million of these chips. That, combined with Google's own TPU buildout, is the first real challenge to Nvidia's grip on AI training at scale.

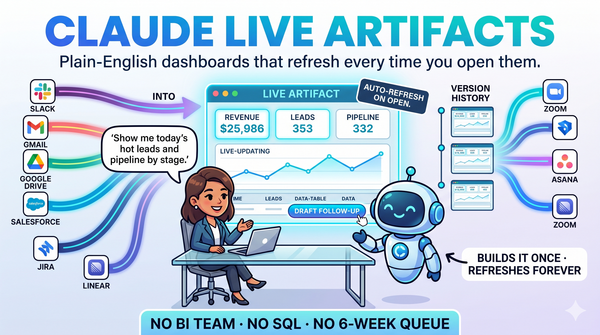

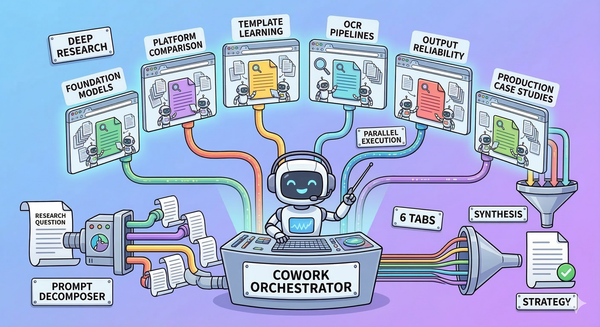

The third is the piece most of the coverage missed: a product called Claude Platform on AWS, flagged in the announcement as "coming soon." Anthropic's main product suite (Claude Code, Claude Cowork, and Live Artifacts) is going to show up as a first-class experience inside the AWS console, billed through an existing AWS account, governed by the same permission controls a company already uses for everything else on AWS. I cannot think of another arrangement like this. OpenAI's models are available on Microsoft's Azure cloud, but the full ChatGPT product is not embedded inside the Azure console. Google runs Gemini inside its own cloud, but no outside AI company has had its working product suite show up as a native surface inside a competitor's cloud. The practical effect is that most large companies, which already have an AWS account, can turn on the Claude product suite without a new vendor contract, a new procurement cycle, or a new billing relationship. The friction of adopting Claude drops close to zero.

Why the velocity matters

Earlier this week I wrote about Anthropic's shipping pace: 36 significant releases across Claude, Cowork, and Claude Code in the 16 weeks since January 1, roughly one every three days. Amazon is effectively voting for that pace to continue. The $25 billion is a bet that the next 16 weeks look like the last 16, and the 16 weeks after that do too.
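The "one every three days" figure follows directly from the release count and the window. A minimal sketch of the arithmetic, with variable names of my own choosing:

```python
# Release cadence from the figures quoted in the article.
releases = 36
weeks = 16

days = weeks * 7                      # length of the window in days
days_per_release = days / releases    # average gap between releases
releases_per_week = releases / weeks  # average weekly throughput

print(f"Window: {days} days")
print(f"Average gap between releases: {days_per_release:.2f} days")
print(f"Average releases per week: {releases_per_week:.2f}")
```

This is an average over the window, so it says nothing about how evenly the releases were actually spaced.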

The grand spiral continues

The deal is part of a recurring pattern that is starting to look very much like an upward spiral. Amazon has committed $75 billion in the last eight weeks to two competing AI labs (OpenAI in February, Anthropic this week), with both labs contractually committed to spending multiples of that amount back on Amazon's cloud services. Equity flows in, revenue flows back, and Amazon books both as wins. As with most things, it will work until it doesn't.

What it actually means

Amazon's $25 billion in equity financing bought it a $100 billion customer contract, an anchor tenant for its non-Nvidia chip business, and a distribution deal that puts Claude inside AWS, which most of the Fortune 500 already uses. Call the equity what you want. Amazon locked in a decade of revenue from the fastest-growing enterprise AI company on earth, and Anthropic locked in the compute it needs to keep shipping new features every three days and doubling the business every quarter. For everyone else, the practical implication is simpler. Claude is about to be one click away inside the cloud console most large companies already use, and the buying question shifts from "should we buy Claude" to "should we turn on Claude."